LinkedIn is starting to get flooded with posts by recruiters and technical leaders sharing the same unsettling experience: ending up on live calls with candidates using real-time video and voice AI filters.

The signs are subtle at first: lips that barely move, flat or emotionless reactions, and what appears to be a poor internet connection. Another big red flag is that the candidate’s profile often feels too perfect.

The scammers primarily target IT companies hiring for remote positions. They use AI to create the ideal candidate for an open role, crafting polished resumes, convincing LinkedIn profiles with professional headshots, and even entire websites. Once hired, they gain access to internal systems — opening the door to data exfiltration, extortion, or malware deployment.

Not every fake applicant is an outright scammer, though. Some are real job seekers trying to bypass restrictions they consider unfair, such as geographic hiring limits, visa barriers, sanctions, or compensation gaps between markets. Regardless of their motivations, as AI lowers the cost of deception, HR professionals need to be prepared to spot inconsistencies.

According to Pindrop, a security start-up, one in six job applicants was fake in 2025. The trend is expected to worsen: research and advisory firm Gartner predicts that by 2028, one in four candidate profiles worldwide will be fraudulent.

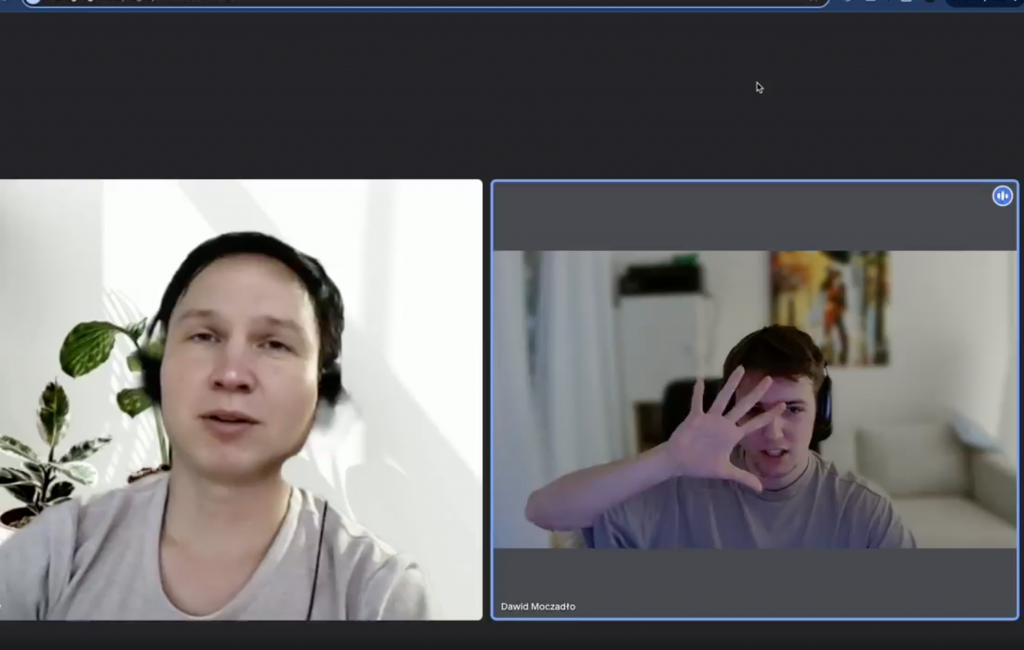

Dawid Moczadło, Co-Founder of the code security platform Vidoc, was among the first to share the experience of unknowingly interviewing a fake candidate. He recounted that he ended the interview after the applicant refused a simple request: to place a hand in front of their face, an action that would have revealed whether the candidate was using a filter.

“I felt a little bit violated, because we are the security experts,” Moczadło told CBS. The incident prompted Vidoc to change its hiring process, at a higher cost: the company now flies candidates in for in-person interviews, covering their travel expenses and paying them for a full day of work. Vidoc’s decision reflects a broader trend: as The Wall Street Journal has reported, the rise of AI is driving a return to in-person interviews.

After the incident, Vidoc also published a guide to help HR professionals across industries spot potentially fraudulent applicants. What started as a breach became gated content — and ultimately, a business opportunity. Not every company will get that lucky, though. Below, we list some of the key tips from Vidoc’s guide.

How to detect a fake applicant

Fake applicants can have highly polished LinkedIn profiles: 500+ connections, shared contacts with you, and a seemingly solid history. One thing they can’t fake, though, is the profile’s creation date. To check this, click on the “More” button on their profile, then select “About this profile.” There, you’ll see when the account was created, along with other useful details.

During video interviews, pay close attention to audio-video sync issues. If something feels off, you can start asking questions to verify the candidate’s claims. For example, inquire about their current location, nearby landmarks like cafés, or even the local weather — you can quickly cross-check their answers online to see if they align.

Importantly, coding tests won’t necessarily flag a fraudulent applicant, because such applicants can still possess strong technical skills. The real concern is less about competence than about trust. Unlike a regular candidate, a fraudulent applicant may not be willing to use those skills in your best interests, and you want to avoid hiring someone who could deliberately misuse their access to your systems.

Now, if the interview becomes really suspicious, you can always ask the candidate to place a hand in front of their face and observe how they respond.
